Thunderclap, the Newsletter of Rolling Thunder Computing

Volume 7, Number 1, Summer 2005

In this issue:

Feature Article: A Security Hole in ClickOnce Deployment.

Marks! I ha' marks o' more than burns - deep in my soul an' black,

An' times like this, when things go smooth, my wickudness comes back.

The sins o' four and forty years, all up an' down the seas,

Clack an' repeat like valves half-fed.... Forgie's our trespasses.

-- Rudyard Kipling, forecasting Microsoft repeating their mistakes

“McAndrew’s Hymn”, 1894

Microsoft is about to make a major mistake in their user security model. For the last 3 1/2 years, since Bill Gates's "Trustworthy Computing" e-mail of January 15, 2002, they've been trying to convince the world that they've raised security to their highest priority. A large part of that is making applications secure by default. Instead of shipping applications in an unlocked state and expecting administrators to lock up the things they care about protecting, Microsoft started shipping applications in a secure state and requiring admins to unlock the pieces they need to use, as anyone who's ever tried to start up a web server on Windows Server 2003 has discovered. Because all humans, even professional administrators, are lazy, this was a good strategic change.

Unfortunately for all of us, Microsoft is about to disregard this strategy in a very visible, public, and dangerous way. They're repeating the mistakes of the Authenticode debacle from ten years ago, at a time when there's much less excuse for and tolerance of such foolishness. Worried? You should be, especially if you own any Microsoft stock. (As I do. It hasn't done much since I bought it. This isn't going to help.) Unless they reverse this decision before release of the .NET Framework 2.0, they’re going to be slammed in the popular press and will have to retreat under fire within six months. Kipling's poem, quoted above, illustrates that this isn't a new problem for humanity.

Sandboxing Web Applications

Rich client programs provide a better user experience than browser-hosted applications. The difficulty is that they are harder to install correctly and safely on the users' machines. You are probably familiar with Zero-Touch Deployment (hereafter ZTD, sometimes also called No-Touch Deployment) for Windows Forms applications. You publish a Windows Forms application to a web server. When the user requests the application, via shortcut or link or typing it into his browser, the forms app gets downloaded to the client PC and runs there. Since it runs within the .NET Common Language Runtime, the CLR's Code Access Security (CAS) system restricts its operations according to the level of trust that it deserves. Applications signed with the strong name of a trusted vendor, like Microsoft, are granted a powerful set of privileges, such as accessing unmanaged code, accessing any environment variable, or accessing any common file dialog box (open or save). Unsigned applications from the intranet zone are given a lesser set of privileges (no unmanaged code, environment variable access restricted to the "username" variable only, use of any file dialog box allowed). Unsigned, untrusted applications from the Internet are further restricted (no unmanaged code, no environment variable access, only the File Open dialog box is permitted).
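The three default tiers described above can be summarized as a lookup table. What follows is a minimal Python sketch of that idea, not the CLR's actual policy engine: the tier names and permission labels are hypothetical, invented here only to mirror the three examples in the paragraph above (unmanaged code, environment variables, file dialogs).

```python
# Illustrative model only. The tier names and permission labels below are
# made up for this sketch; they are not real CLR identifiers.
DEFAULT_GRANTS = {
    # Signed with the strong name of a trusted vendor
    "trusted_vendor": {"unmanaged_code", "env:*",
                       "file_dialog:open", "file_dialog:save"},
    # Unsigned, from the intranet zone
    "intranet": {"env:USERNAME", "file_dialog:open", "file_dialog:save"},
    # Unsigned, untrusted, from the Internet zone
    "internet": {"file_dialog:open"},
}


class SecurityError(Exception):
    """Raised when code demands a permission its tier was not granted."""


def is_granted(tier, permission):
    """Return True if the tier's default grant set covers the permission."""
    grants = DEFAULT_GRANTS[tier]
    if permission in grants:
        return True
    # "env:*" stands for access to any environment variable.
    return permission.startswith("env:") and "env:*" in grants


def demand(tier, permission):
    """Fail loudly, the way a sandboxed call would, if access is not granted."""
    if not is_granted(tier, permission):
        raise SecurityError(f"{tier} code may not use {permission}")
```

In this model, `demand("internet", "file_dialog:open")` returns quietly, while `demand("internet", "file_dialog:save")` raises `SecurityError`. The point of the table is that the answer depends only on where the code came from, never on what the user is willing to click.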

This sandboxing of downloaded applications is a big advance, very much a step in the right direction. Installing an ActiveX control was and is an all-or-nothing proposition. Either you have full unprotected sex with it, or you don't even shake hands with it, but there's nothing in between. You have to have complete and total trust in the publisher, essentially an impossible hurdle for code you discover on the Internet. CAS, on the other hand, automatically applies condoms of varying thicknesses to each downloaded application, according to the degree of trust you have in the place where it comes from. An administrator can adjust the degree of trust that you have for various applications (trust those signed by this vendor or coming from this trusted site more, and others less), but the installation defaults are quite reasonable. It's more or less safe out of the box for users who do nothing at all. Someone who really wants, out of the goodness of his heart, to write a web application showing naked dancing weasels can do so within the limited subset of privileges available, and the OS will enforce the restrictions so you can run it without risking (much) harm to your system. That's very much how it needs to be, and I have many times commended Microsoft for advancing to this level.

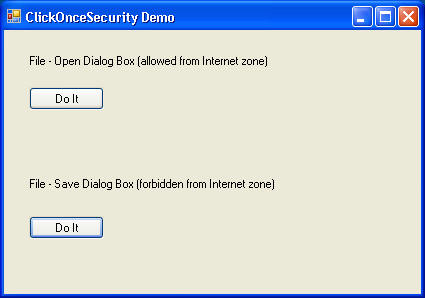

You can see an example of a ZTD application running in its sandbox by clicking on this link, provided you have the .NET Framework 2.0 Beta 2 installed on your machine. It downloads and runs a sample application (shown above) from my web server, which is definitely in the Internet zone.

Clicking on the top button opens up the File -- Open dialog box, which is permitted from this zone. Clicking the bottom button attempts to bring up the File -- Save dialog box. This is not permitted from the Internet zone, so you get a security exception when this happens.

The Problem With ClickOnce

ZTD is easy to do, and CAS makes it safe by default. But as always, it involves significant tradeoffs. ZTD only works when the user is connected to the application server. Since more than half of PCs sold today are notebooks, users clearly care about disconnected operation, which ZTD can't handle. Even when the client PC is connected to the application server, you have to download the assemblies that you use every time, so the network traffic and latencies can be significant. ZTD also doesn't make entries in the user's start menu or the Add/Remove Programs part of the Control Panel, which means that the program is harder to use.

To address these user interface deficiencies, Microsoft added ClickOnce Deployment to the .NET Framework 2.0, code-named "Whidbey", slated for release in late November 2005. Brian Noyes wrote an excellent article on it for the May 2004 issue of MSDN Magazine, which you can read at this link. You write a Windows Forms application more or less as before. Only this time, you package it up in a manifest using Visual Studio or other tools before publishing it to the web site.

The user installs the ClickOnce application by clicking on a link as before. Only this time, the app downloads and installs itself on the client machine and stays there, ready for disconnected operation. It makes entries on the start menu so the user can launch it, and in the control panel so the user can remove it. A ClickOnce application can check for updates periodically, downloading and installing them when found, and the control panel lets the user roll back if he doesn't like the new version. The user gets rich client applications, which makes his life easier, with quick and cheap installation, which makes the system administrator's life easier.

Microsoft tried hard to make ClickOnce deployment safe, and almost succeeded. In this business, that's called failing. The client application runs in the CLR, so its privilege set is restricted by CAS according to its trust setting in the same manner as for ZTD. Good. The user doesn’t have to have administrator privileges, as he does with msi, to install a ClickOnce application. Easier and safer, again good. The ClickOnce installation can't modify the GAC, so it can't hurt anyone that way. Good again. And the application developer can't modify the download and installation process, for example by adding custom steps such as data collection. Microsoft deliberately chose to be more secure, even at the cost of some flexibility. Good, good, and good. ClickOnce deployment requires an application to be signed with a certificate, and will refuse to install unsigned applications because they might have been tampered with. Another good thing, although I am informed that the final release version will not require this. So far, it looks like they've thought carefully about what it takes to live in the nasty online world and designed software to do it pretty well.

Which makes it all the more astounding that they've included one glaring, to my mind FATAL, exception. What if the application wants a higher level of privilege than CAS would grant it? For example, what if an Internet application wants to access unmanaged code, such as COM objects? It can place attributes in its deployment manifest that state that it wants to do these things. For example, an application that wants full trust will be marked like this:

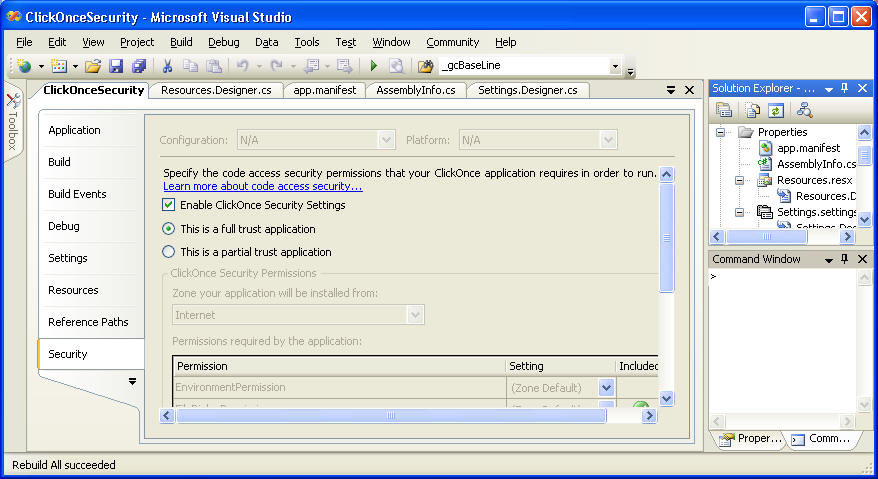

(Note: asking for full trust, as this picture shows, is a bad idea. Almost no application needs this level, and asking for it is a violation of the Principle of Least Privilege. Failing to figure out which permissions you actually do need, and requesting only those, is the mark of a lazy and/or dumb programmer. I'd change the picture, but I'm too lazy.)
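For readers who want to check a manifest without squinting at a screenshot, here is a small Python sketch that looks for a full-trust request. The XML fragment is an assumption: it follows the `trustInfo` layout used by released ClickOnce manifests as best I can reconstruct it, and the Beta 2 markup in the picture may differ in detail. The helper name `requests_full_trust` is hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace used by ClickOnce manifest elements (assumed from released versions).
ASM_V2 = "urn:schemas-microsoft-com:asm.v2"

# Assumed shape of a full-trust request; Beta 2 manifests may differ.
SAMPLE_TRUST_INFO = """
<trustInfo xmlns="urn:schemas-microsoft-com:asm.v2">
  <security>
    <applicationRequestMinimum>
      <PermissionSet class="System.Security.PermissionSet" version="1"
                     Unrestricted="true" ID="Custom" SameSite="site" />
      <defaultAssemblyRequest permissionSetReference="Custom" />
    </applicationRequestMinimum>
  </security>
</trustInfo>
"""


def requests_full_trust(trust_info_xml):
    """Return True if any PermissionSet in the fragment is marked Unrestricted."""
    root = ET.fromstring(trust_info_xml.strip())
    for permission_set in root.iter(f"{{{ASM_V2}}}PermissionSet"):
        if permission_set.get("Unrestricted", "").lower() == "true":
            return True
    return False
```

Calling `requests_full_trust(SAMPLE_TRUST_INFO)` flags the sample above. A manifest that lists individual permissions instead of `Unrestricted="true"` would not be flagged, which is what the Principle of Least Privilege asks for.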

The original idea behind security request metadata was to provide an easy way for quick failure. If an application was marked as requiring a privilege that it wouldn't be granted, then the security mechanism would fail it at load time instead of waiting for the user to do three hours work and be unable to save it. Any lady of the evening will tell you that she'd much rather hear about, er, special requests in advance, so she can decide if she'll accept the gig or not, rather than have to refuse the chandelier and waffle iron after an evening of carousing for which she hasn't yet been paid. (Note to hookers and software consultants, who have much in common: get a deposit in advance.)
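The quick-failure idea is easy to show in miniature. This Python sketch is an illustration of the principle only, not how the CLR loader is written; the function name, exception name, and permission labels are all hypothetical.

```python
class LoadTimeSecurityError(Exception):
    """Raised before any application code runs."""


def load_application(requested, granted):
    """Compare declared requests against the grant set up front.

    If anything the application says it needs is missing, refuse to start,
    rather than letting the user work for hours and fail at Save time.
    """
    missing = set(requested) - set(granted)
    if missing:
        raise LoadTimeSecurityError(
            "refusing to start; not granted: " + ", ".join(sorted(missing))
        )
    return "running"
```

With a grant set of `{"file_dialog:open"}`, an application that declares it needs `"file_dialog:save"` is turned away at the door, and one that declares only `"file_dialog:open"` starts normally.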

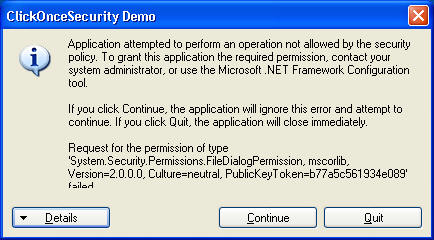

So what happens when a ClickOnce application is marked as requiring a privilege that the user's CAS settings don't allow? Does CAS simply block it and say, "No, you can't touch me there, and damn your eyes for asking?" It does not. It falls back on the tried and UNtrue mechanism, hated by admins everywhere, long since discredited by any security authority -- it prompts the user with a dialog box, as shown above.

If the user clicks OK, and they always do, then the downloaded application is granted the privilege that it has requested, even though his CAS settings would otherwise forbid it. That's not OK. An ordinary user can't possibly know whether an app is safe or not, and he shouldn't be asked to. That's why CAS was developed in the first place, and that's why it was such a big advance. Microsoft put a hell of a lot of effort into dealing safely with untrusted code, and did a decent job of it. But ClickOnce, as it's now constituted, asks the user whether to bypass it every single time a bad guy says that he doesn't like playing in the sandbox. Wrong choice. (Note: if the application is signed by a publisher who is configured as trusted, not just holding a certificate from a root authority, this box does not appear. This is the primary model envisioned for enterprise development, and it's fine. See Brian Noyes's follow-on article in the April 2005 edition of MSDN, available online here.)

By default, a user is prompted to elevate the application's permissions if the application a) comes from the MyComputer, TrustedSites, or LocalIntranet zones, or b) comes from the Internet zone and is signed with a certificate issued by a trusted root authority, such as Verisign. But an administrator at a company with any code security policy at all won’t want her users to see the box, ever. She can modify it via the obscure registry key

\HKLM\Software\Microsoft\.NETFramework\Security\TrustManager\PromptingLevel

But the key isn't even PRESENT in the default installation. An admin who wants to close this new hole needs to add it to the registry, even to the point of spelling it correctly. That's not making things secure by default.
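To make the defaults and the fix concrete, here is a Python sketch that models the prompting rules and writes out a .reg file that turns the prompt off. The value names (`MyComputer`, `LocalIntranet`, `TrustedSites`, `Internet`, `UntrustedSites`) and the strings `Enabled`, `AuthenticodeRequired`, and `Disabled` are taken from Microsoft's documentation of this key for released versions of the Framework; treat them as assumptions and verify them against the build you are running. The function names are hypothetical.

```python
KEY_PATH = (r"HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\.NETFramework"
            r"\Security\TrustManager\PromptingLevel")

# Behavior when the key is absent, as described in the paragraph above
# (UntrustedSites is an assumption from later documentation).
DEFAULT_LEVELS = {
    "MyComputer": "Enabled",
    "LocalIntranet": "Enabled",
    "TrustedSites": "Enabled",
    "Internet": "AuthenticodeRequired",
    "UntrustedSites": "Disabled",
}


def user_is_prompted(zone, signed_by_trusted_root, levels=DEFAULT_LEVELS):
    """Return True if the user would be asked to elevate permissions."""
    level = levels[zone]
    if level == "Enabled":
        return True
    if level == "AuthenticodeRequired":
        return signed_by_trusted_root
    return False  # "Disabled": never ask


def locked_down_reg_file(zones=DEFAULT_LEVELS):
    """Build .reg file text that sets every zone to Disabled."""
    lines = ["Windows Registry Editor Version 5.00", "", f"[{KEY_PATH}]"]
    lines += [f'"{zone}"="Disabled"' for zone in zones]
    return "\n".join(lines) + "\n"
```

Under the defaults, `user_is_prompted("Internet", True)` is true, which is exactly the Authenticode-style case. Saving the output of `locked_down_reg_file()` to a file and importing it is one way an admin could avoid typing the key by hand; setting every zone to `Disabled` is a design choice for this sketch, and a real policy might leave some zones open.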

As bad as the former case is, it's the latter case (the user is prompted if the application is signed by a certificate issued by a trusted root authority) that worries me most. This is the old Authenticode model. It takes users back to the days of 10 years ago, when every ActiveX control asked the user to make an all-or-nothing decision. If anything, it's even worse today, because it's now possible to restrict a component's access, whereas 10 years ago all or nothing was the only choice technically feasible. To allow every single user, by default, the choice of bypassing the security model every single time a bad guy asks, is such stunning lunacy that I find myself spluttering, lost for words in describing it. It's committing malpractice. It's playing Russian Roulette with an automatic. It's not technically required, it's a deliberate design choice that abandons everything Microsoft has ever said or done since their turn towards security three years ago.

Remember what a certificate from a root authority means and what it doesn't mean. It doesn't mean that the app is benign. It doesn't mean that the publisher is a nice guy, kind to children or small animals. If it's working perfectly (and nothing in this life ever is, computing or otherwise), then it means that the app was written by the guy who owns the certificate, whoever that might be, to the extent that the root authority has taken the trouble to verify it. That's worth nothing, zilch, nada, exactly 0.00. The bad guys on 9/11 HAD picture IDs. That didn't stop anything, did it? And a certificate from a root authority won't keep a bad guy from trashing your computer. How many of you readers who are parents of daughters (or non-traditional sons) would tell them that it's OK not to insist on condoms as long as their guy has a driver's license? That's what this box is asking.

LATE BREAKING CHANGE: I am informed that the final release will not even require a signature, as Beta 2 does. If this does turn out to be true, it would bother me less than you think. As the previous paragraph just stated, signatures aren't worth much today. In this case, any random stranger application can ask the user, "You don't know me, but do you mind if I take off my condom? I promise I'm OK." Anyone who thinks that the global transmission of AIDS is a serious problem will have to consider this a bad idea.

I've taken the same client program that demonstrated ZTD and packaged it for ClickOnce. You can download and install it by clicking on this link (if you trust me, that is. And if you believe that, then the check is in the mail, I'm from the government and I'm here to help you, I'll still respect you in the morning, and oh, no, that's a cold sore -- honest!). After it installs, check your start menu and you should be able to run it. Now both buttons should bring up a dialog box; the top one because it is allowed for the Internet zone from which the app came, the bottom one because you clicked the button that allowed it the greater level of privilege.

I don’t have a problem with this functionality existing, as long as it's turned off by default. If someone wants to do the work and dig around to open up this security hole, God bless him. But for unsuspecting users to be asked, constantly, “Hey, this application might rape your wife and beat your children and kill your dog and burn your house down. OK?” is not OK. Even if, ESPECIALLY IF, it's asked by trustworthy applications. Users get so used to clicking OK that they don't think about it any more. See my End Bracket column on MSDN, entitled "To Confirm is Useless, to Undo Divine" for more on the uselessness of confirmation dialogs such as this one.

In a previous edition of this newsletter, I coined Platt's Third Law of the Universe, which states simply that "Laziness Trumps Everything." All human beings are fundamentally lazy and will expend the least possible energy in all cases. Therefore, if something is easy to do, it will be done frequently, whether it should be or not; and if something is difficult to do, it will be done seldom, whether it should be or not. Therefore, a good application design makes the good, safe, and smart things easy to do; and the bad, stupid, dangerous things hard to do. The current implementation of ClickOnce deployment violates this law. The software gods will severely punish architects who fail to learn from their mistakes of ten years ago.

In fairness, I have to say that balancing between the security and usability needs of different user populations is difficult. The level of security that is necessary and appropriate for, say, mutual fund company Vanguard (which manages almost a trillion dollars of customers' money) would be much tighter, harder to use, and more expensive than that of a male teenager (who isn't or shouldn't be risking anything not his own, and can be relied on to do almost anything to see the naked dancing weasels). It leads me to ponder the question of how a general-purpose operating system product can meet the needs of both types of users.

But read this exact quote from Steve Ballmer's keynote address to TechEd 2004 in San Diego. I cut and pasted it from Microsoft's own web site, adding only the one bracketed word to clarify the antecedent of the preceding pronoun. You can read the original here, in the unlikely event that you actually care.

"So on the technical front, on the support front, on the partnership front and on the legal front we've made this [security] absolutely a number one priority. Because, if we don't, not only will you not be doing more with less, not only will we not be being as responsive as we need to be, but the confidence level in all of the people that all of us serve in information technology will decrease, and that's a very bad thing in the long run. We want people to bet more and depend more on information technology solutions to help improve business performance, and we have to make sure that these systems are up, secure and reliable. And you have absolutely my commitment of the importance and priority we place on that at Microsoft."

If you can reconcile the default configuration of ClickOnce, prompting the user to allow access to unsafe code every time a bad guy asks, with Ballmer's speech, please tell me how, because I can't.

It's not hard to fix. One registry key. Microsoft should make it safe by default.

On a macroscopic scale, the reason we have this problem is that it's very hard to know when a piece of code is safe. The question we need to answer is usually: “I never heard of you until I googled for [whatever], now I’m reading your site and wouldn’t mind trying your code, but I have no good way of determining whether to trust you or not.”

What we really need is something like a software “Better Business Bureau” or Good Housekeeping Seal or Consumer Reports, the approval of which (authenticated by mathematical algorithms) promises that the software to which it is attached really is trustworthy and does not come from a bad guy. An Authenticode certificate doesn’t currently mean that. Nobody currently performs such testing and approval. But that would help solve the basic problem of “how do I know I can trust you?” I'll be developing this idea in future newsletters.

In the meantime, give me a call if I can help you out with anything. Until next time, as Red Green would say, "Keep your stick on the ice."

Blatant Self Promotion: Would you buy a used car from this guy?

At Harvard Extension This Fall -- Hard-Core .NET Programming. Meets Mondays at 7:35 beginning Sept 19, Harvard University

My class at Harvard Extension is coming up this fall. As you've probably heard, I've returned to my hard-core programming class, featuring a cumulative project, the approach I pioneered with my SDK class back in 1992. Students from those years still come up to me at conferences and tell me how that deep, persistent knowledge of how things really tick under the hood, not just clicking wizard buttons and saying, "Gee, whiz!", put them ahead of their competitors then and now. If you really want to get your hands dirty and don't mind working hard, this is the class for you. We'll cover the .NET Framework in depth, do a lot of Web Services, and even get into compact portable devices. See the syllabus online, then come on down the first night and check it out.

NEW! 5-day In-House Training Class on Web Services, Featuring WSE 3

Web Services are now sophisticated enough to warrant a topic in themselves, so I've written a 5-day class to do just that. We study web services in depth, from architecture to implementation, using Microsoft's WSE version 3.0. See the syllabus online here, then call me to schedule yours. It can also be shortened to three days if necessary.

NEW! 2-day In-House Training Class, What's New in Whidbey

This class is for programmers familiar with .NET 1.1 and 1.0. It covers everything that's new in the forthcoming Visual Studio 2005, .NET Framework 2.0, and ASP.NET 2.0. As with all Rolling Thunder classes, it can be custom tailored to your needs. See the syllabus online here, then call me to schedule yours.

5-day In-House Training Class on .NET or .NET for Insurance

.NET is here, and it's hot. It changes everything in the software business, and you can't afford to be without it. Rolling Thunder Computing now offers in-house training classes on .NET, using my book as the text. See the syllabi online here, then call to schedule yours.

ACORD XML for Insurance In Depth, Including Web Services

Insurance carriers, learn ACORD XML from David Platt. This 5-day class will give you everything you need to know to jump-start your application development. Learn about security and plug-and-play, and combining ACORD XML with XML Web Services. See the syllabus online here. It can be customized to your needs.

And now, the moment for which I know you've all been waiting -- the pictures of my two girls. Annabelle is now 5 years old, headed to kindergarten this fall. She's learning to swim and ride a two-wheeler. Who could have imagined this from the tiny baby first announced in this newsletter? Lucy is now 2 1/2, beloved by all. She's a climber and a daredevil, hence the window guards you see in the background.

Thunderclap is free, and is distributed via e-mail only. We never rent, sell or give away our mailing list, though occasionally we use it for our own promotions. To subscribe, jump to the Rolling Thunder Web site and fill in the subscription form.

Legal Notices

Thunderclap does not accept advertising; nor do we sell, rent, or give away our subscriber list. We will make every effort to keep the names of subscribers private; however, if served with a court order, we will sing like a whole flock of canaries. If this bothers you, don't subscribe.

Source code and binaries supplied via this newsletter are provided "as-is", with no warranty of functionality, reliability or suitability for any purpose.

This newsletter is Copyright © 2005 by Rolling Thunder Computing, Inc., Ipswich MA. It may be freely redistributed provided that it is redistributed in its entirety, and that absolutely no changes are made in any way, including the removal of these legal notices.

Thunderclap is a registered trademark ® of Rolling Thunder Computing, Inc., Ipswich MA. All other trademarks are owned by their respective companies.